This page contains the opening portion of Chapter 15 of RAFT 2035.

Copyright © 2020 David W. Wood. All rights reserved.

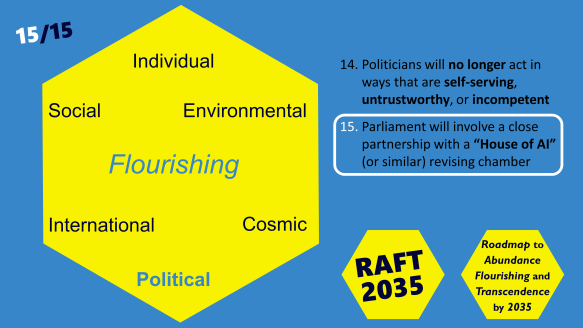

15. Politics and AI

Goal 15 of RAFT 2035 is that parliament will involve a close partnership with a “House of AI” (or similar) revising chamber.

To restate the goal: politics will benefit from a close positive partnership with enhanced Artificial Intelligence.

Politics features both the strengths and the weaknesses of humans, writ large. When we sometimes complain that our politicians are stupid or selfish, we should remember that humans in general can be stupid and selfish – as well as, on occasion, being wise and selfless. The difference is in the degree of power that politicians can possess.

The dangers of power

Power tends to corrupt, warned Lord Acton, the nineteenth-century historian and politician. Absolute power corrupts absolutely. Power can corrupt the clarity of a politician’s thinking, and their sense of moral duty. It can lead them to forget their social ties to their fellow citizens. It can cause them to imagine themselves as being particularly worthy and deserving. It’s little wonder that initially admirable politicians often go downhill over time.

If power tends to corrupt, what is truly worrying is that never before have we humans held so much power in our hands. Science and technology are providing us with spectacular capabilities. We face the threat of unprecedented large-scale surveillance and manipulation by forces seeking undue influence. This manipulation can be subtle rather than blatant. That’s what gives it greater power. New technology also strengthens those who would wield fake news and other black-art psychological techniques to frighten or incite people into making choices that run against their actual best interests. All this raises the spectre of politicians taking and holding power more vigorously than ever before.

Checks and balances, under threat

In principle, what limits politicians from abusing power is the set of checks and balances of a democratic society: separation of powers, a free press, independent courts, and regular elections. The effectiveness of elections depends, however, on electors being able to see matters sufficiently clearly, and to assess scenarios objectively.

Too often, alas, we electors prefer to mislead ourselves into believing comforting untruths and half-truths. We prefer reassuring slogans over an awareness that matters are actually much more complicated. We use our intelligence, not to find out what the true situation is, but to find rationales that justify whatever we have already decided we want to do. We tend not to care much whether these rationales and slogans are sound. We care more that these slogans bolster our self-image, and raise our perceived importance inside the groups of people with whom we seek to identify. That’s because we are, thanks to human nature, motivated too often by fear and by vanity.

Clever social media communications seek to push us into emotional reactions rather than careful deliberation. With our hearts on fire, smoke gets in our eyes. With our emotions inflamed, online interactions frequently propel us to champion tribal instincts. With a heightened sense of the importance of group identity, we cheer on pro-group “blue lies” rather than respecting objective analysis. Afraid of having to admit we were previously mistaken, we double down on our convictions, in effect throwing good money after bad. We may succeed in ignoring reality – for a while, until reality bites back, with a vengeance.

Two sets of tools are available to prevent us continuing to misuse our individual human intelligences:

- Collective intelligence, where people help each other to reason more thoughtfully.

- Artificial intelligence, with automated reasoning and data analysis.

Both these sets of tools will be deeply important in the years ahead. The tools interact, raising possibilities for faster progress. At the same time, we need to be aware that these tools are, in their own way, capable of bad outcomes too:

- Collective intelligence can produce collective stupidity.

- AI can help people and corporations pursue dangerous goals more quickly than ever before.

We’ll need to keep our wits about us!

The rise of AI

AI systems are quickly increasing in use around the world as decision support tools. For example, they support medical decisions, legal reviews, assessments of creditworthiness, the selection of the most suitable candidates for employment, and suggestions of new partners for romance and intimacy. Software tools can highlight mistakes in spelling and grammar, awkwardness in style, and wording that is more likely to be effective for particular audiences. Software tools can also flag up instances of mistaken facts, questionable sourcing, and the likelihood that some material, such as a video, has been fabricated or manipulated from its original content.

Before long, AI and other decision support tools should be able to provide very useful analysis and validation of political statements, including legislative changes that politicians are proposing. AI could identify potential problems with legislation sooner, and suggest creative new adaptations and syntheses of earlier ideas. AI could also alert us when we are becoming tired, bigoted, or selective in our use of evidence, and could recommend more fruitful ways to continue a discussion. This AI, therefore, could help us to become, not only cleverer, but also kinder and more considerate.

However, AI systems are prone to varying degrees of bias, misunderstanding, quirks, and other faults – some of which are very serious. People who use these tools sometimes put too much trust in them, without independently assessing their recommendations. Another risk, identified by writer Jamie Bartlett as “the moral singularity”, is that people will lose their ability to take hard decisions independently, through lack of practice, on account of delegating more and more decisions to AI systems. With atrophied moral intuitions, people will unintentionally become dominated by the moral decisions made on their behalf by machine intelligence.

<snip>