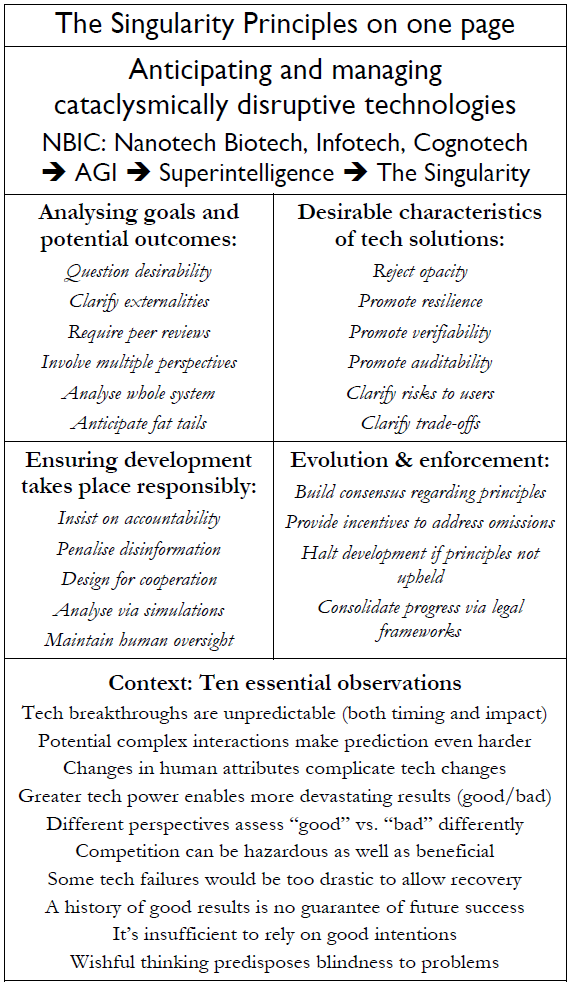

The Singularity Principles

Anticipating and managing cataclysmically disruptive technologies

Book availability

Ebook (Kindle) – Published on 4th July 2022

Paperback – Published on 28th July 2022 – ISBN 978-0-9954942-6-8

Audio – Published on 4th August 2022

Endorsements for The Singularity Principles

“An excellent and detailed introduction to the AI Safety problem, the Singularity, and our options for the future.”

– Roman Yampolskiy, Professor of Computer Science, The University of Louisville

“It truly is gratifying to see deep thought on these crucial issues.”

– David Brin, Science fiction author and scientist, Winner of Nebula, Locus, Campbell, and Hugo Awards

“One of the most important books that you will read this decade, which David Wood calls ‘The Decade of Confusion’. The 2020s bring us to the cusp of a transformation in human civilization quite unlike any we have previously experienced. David’s book is an essential handbook to aid you in navigating this transformation.”

– Ted Shelton, Expert Partner, Automation and Digital Innovation, Bain and Company

“The Singularity Principles is an absorbing, comprehensive overview of the challenges I truly believe we will soon face as AGI arrives. Are we intellectually and emotionally ready to have serious discussions about it?”

– Patty O’Callaghan, Google’s Women Techmakers Ambassador

“A unique, comprehensive and principled framework for riding the tsunami of change coming our way, instead of drowning in it.”

– Nikola Danaylov, Founder of Singularity Weblog and Singularity.FM

“David’s new book is both amazing and insane (in the best possible way).”

– Dalton Murray, Lead Contributor to H+Pedia and the Vital Syllabus

“No Solarpunk or regenerative movement activist should ignore this brilliant futurist’s book on the Singularity. It is neither techno-fetishist nor in denial of its coming!”

– George Pór, Founder and Director of Research, Future HOW

“I have followed David’s work for nearly twenty years. It’s great to see him taking his thinking to the next level. AGI is arriving faster than many expected. It’s vital to raise the calibre of the discussion on AGI and on the Singularity. That’s what this timely, valuable book achieves.”

– Ajit Jaokar, Visiting Fellow and Course Director, Artificial Intelligence: Cloud and Edge Implementations, University of Oxford

“David Wood is a man of great intellect which shines through in this erudite analysis of the potential benefits and costs of cataclysmically disruptive technologies. David’s Singularity Principles provide a strong basis for the critical tasks of the anticipation and management of alternative outcomes. I commend this book most warmly to friends and colleagues alike.”

– Hugh Shields, Chairman and Founder, The Centre for Research into AI and Mankind

“David’s book provides a powerful overview of what’s ahead of us, and thoughtfully describes the opportunities and challenges brought on by the rise of exponential technologies. A must-read for anyone that is looking to increase their Future-Readiness.”

– Gerd Leonhard, Futurist, CEO The Futures Agency, Author and Film Maker, The Good Future

“Various world disruptions are escalating in a way that begs the thought ‘if only we had started serious work on this decades ago’. The pain in years to come will be more severe or catastrophic if we continue as we have. Conversely, if we actively manage things the reward is significantly greater human flourishing. The Singularity Principles form a good framework of behaviours to produce better outcomes than we get from development as currently run. They should be brought to the attention of anyone wishing to improve the way they do things.”

– Peter Jackson, Software Consultant, change management and configuration management

“Black box AIs are being spawned every week and as much money and computer power that is available is being poured into them as fast as possible. We are deep into the rapids and heading for the vertical chute. Is there still time to educate the public? This book needs to be read by a wide audience immediately – especially by those few people who have actual control over the final few steering manoeuvres remaining for AI development.”

– Jonathan Cole, Radiative Cooling Engineer, technologically advanced canopies and systems

“I have just finished reading the manuscript of The Singularity is Nearer to be published in 2023 by my friend Ray Kurzweil. Kurzweil’s new book is very optimistic and ratifies his forecast to reach the technological singularity by 2045. In order to have a positive singularity and flourishing human future, I also highly recommend reading The Singularity Principles so that we avoid most of the hellish scenario and achieve most of the heavenly scenario. David Wood has scored another fantastic book, full of brilliant ideas and great examples.”

– José Luis Cordeiro, Founding Faculty, Singularity University

“This really is an urgent call to action #AIforGood.”

– David Levin, University Entrepreneur in Residence, Arizona State University, and Supervisory Board Chairman, The Learning Network

“David Wood regularly features as a panellist at World Talent Economy Forum events. I always enjoy his contributions, particularly his insights about the future of AI, which you can read about in The Singularity Principles.”

– Sharif Uddin Ahmed Rana, Founder and President, World Talent Economy Forum

“We are witnessing disruptive technologies with an ever-increasing rate of emergence and societal impact. The rise of Artificial General Intelligence presents the possibility of both the most profound benefits and dangers to humanity. There are no historical precedents; no magic formulae to guarantee success. However, The Singularity Principles can guide us as we manage these potent technologies and produce a desired beneficial future.”

– David Shumaker, Director of Applied Innovation, US Transhumanist Party & Transhuman Club

“This important book shines light on looming, potentially irreversible, threats of AI, counterbalanced with pragmatic guidance towards a vision rich with AI opportunities. David Wood provides a prescient call to action, raising awareness and cutting through the confusion.”

– Alan Boulton, Senior Software Engineer, Socionext Europe GmbH

“I cannot stress strongly enough how important this book is – for our own mental health, but also for the health of our species. Real challenges are fast approaching. How we deal with them collectively will mean the difference between a very bright future or a potential cataclysm. Whilst the latter does not sound very hopeful, the path to the former is well discussed, which gives me real hope that humankind could have a very bright future indeed!”

– Iain Beveridge, Director, Telepresent

“Bar none, David is the most well-informed futurist I know! His book deserves to be a best-seller.”

– Matt O’Neill, Futurist Keynote Speaker, Futures Workshops, Creative Communicator

Extract from the Preface

This book is dedicated to what may be the most important concept in human history, namely, the Singularity – what it is, what it is not, the steps by which we may reach it, and, crucially, how to make it more likely that we’ll experience a positive singularity rather than a negative singularity.

For now, here’s a simple definition. The Singularity is the emergence of Artificial General Intelligence (AGI), and the associated transformation of the human condition. Spoiler alert: that transformation will be profound. But if we’re not paying attention, it’s likely to be profoundly bad.

Despite the importance of the concept of the Singularity, the subject receives nothing like the attention it deserves. When it is discussed, it often receives scorn or ridicule. Alas, you’ll hear sniggers and see eyes rolling.

That’s because, as I’ll explain, there’s a kind of shadow around the concept – an unhelpful set of distortions that make it harder for people to fully perceive the real opportunities and the real risks that the Singularity brings.

These distortions grow out of a wider confusion – confusion about the complex interplay of forces that are leading society to the adoption of ever-more powerful technologies, including ever-more powerful AI.

It’s my task in this book to dispel the confusion, to untangle the distortions, to highlight practical steps forward, and to attract much more serious attention to the Singularity. The future of humanity is at stake.

Let’s start with the confusion.

Confusion, turbulence, and peril

The 2020s could be called the Decade of Confusion. Never before has so much information washed over everyone, leaving us, all too often, overwhelmed, intimidated, and distracted. Former certainties have dimmed. Long-established alliances have fragmented. Flurries of excitement have pivoted quickly to chaos and disappointment. These are turbulent times.

However, if we could see through the confusion, distraction, and intimidation, what we should notice is that human flourishing is, potentially, poised to soar to unprecedented levels. Fast-changing technologies are on the point of providing a string of remarkable benefits. We are near the threshold of radical improvements to health, nutrition, security, creativity, collaboration, intelligence, awareness, and enlightenment – with these improvements being available to everyone.

Unfortunately, these same fast-changing technologies also threaten multiple sorts of disaster. These technologies are two-edged swords. Unless we wield them with great skill, they are likely to spin out of control. If we remain overwhelmed, intimidated, and distracted, our prospects are poor. Accordingly, these are perilous times.

These dual future possibilities – technology-enabled sustainable superabundance, versus technology-induced catastrophe – have featured in numerous discussions that I have chaired at London Futurists meetups going all the way back to March 2008.

As these discussions have progressed, year by year, I have gradually formulated and refined what I now call the Singularity Principles. These principles are intended:

- To steer humanity’s relationships with fast-changing technologies,

- To manage multiple risks of disaster,

- To enable the attainment of remarkable benefits,

- And, thereby, to help humanity approach a profoundly positive singularity.

In short, the Singularity Principles are intended to counter today’s widespread confusion, distraction, and intimidation, by providing clarity, credible grounds for hope, and an urgent call to action.

This time it’s different

I first introduced the Singularity Principles, under that name and with the same general format, in the final chapter, “Singularity”, of my 2021 book Vital Foresight: The Case for Active Transhumanism. That chapter is the culmination of a 642-page book. The preceding sixteen chapters of that book set out at some length the challenges and opportunities that these principles need to address.

Since the publication of Vital Foresight, it has become evident to me that the Singularity Principles require a short, focused book of their own. That’s what you now hold in your hands.

The Singularity Principles is by no means the only new book on the subject of the management of powerful disruptive technologies. The public, thankfully, are waking up to the need to understand these technologies better, and numerous authors are responding to that need. As one example, the phrase “Artificial Intelligence” forms part of the title of scores of new books.

I have personally learned many things from some of these recent books. However, to speak frankly, I find myself dissatisfied by the prescriptions these authors have advanced. These authors generally fail to appreciate the full extent of the threats and opportunities ahead. And even if they do see the true scale of these issues, the recommendations these authors propose strike me as being inadequate.

Therefore, I cannot keep silent.

Accordingly, I present in this new book the content of the Singularity Principles, brought up to date in the light of recent debates and new insights. The book also covers:

- Why the Singularity Principles are sorely needed

- The source and design of these principles

- The significance of the term “Singularity”

- Why there is so much unhelpful confusion about “the Singularity”

- What’s different about the Singularity Principles, compared to recommendations of other analysts

- The kinds of outcomes expected if these principles are followed

- The kinds of outcomes expected if these principles are not followed

- How you – dear reader – can, and should, become involved, finding your place in a growing coalition

- How these principles are likely to evolve further

- How these principles can be put into practice, all around the world – with the help of people like you.

Click here to read the entirety of the Preface.

A full listing of the table of contents can be found here (including links to copies of each chapter).

Credits

The graphic illustration on this page includes a design by Pixabay member Ebenezer42.